A new MOX Report entitled “Neural Markov chain Monte Carlo: Bayesian inversion via normalizing flows and variational autoencoders” by Bottacini, G.; Torzoni, M.; Manzoni, A. has appeared in the MOX Report Collection.

Check it out here: https://www.mate.polimi.it/biblioteca/add/qmox/21-2026.pdf

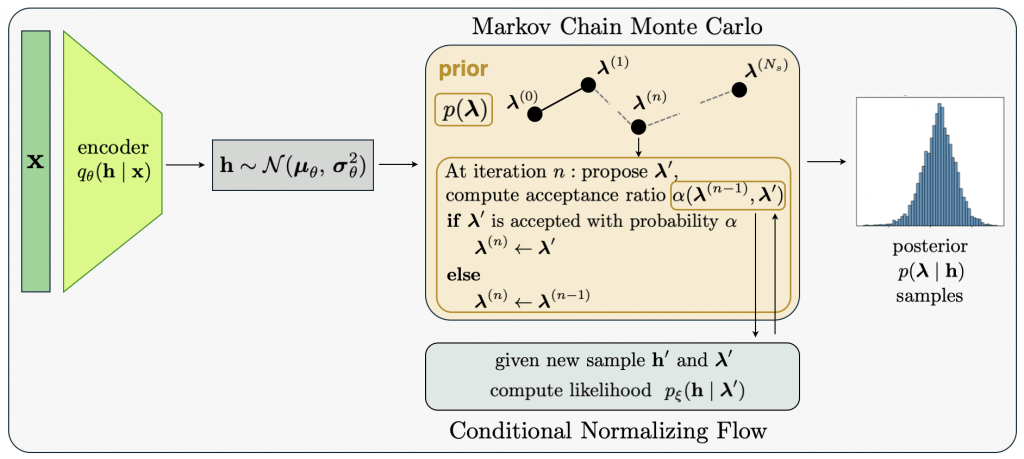

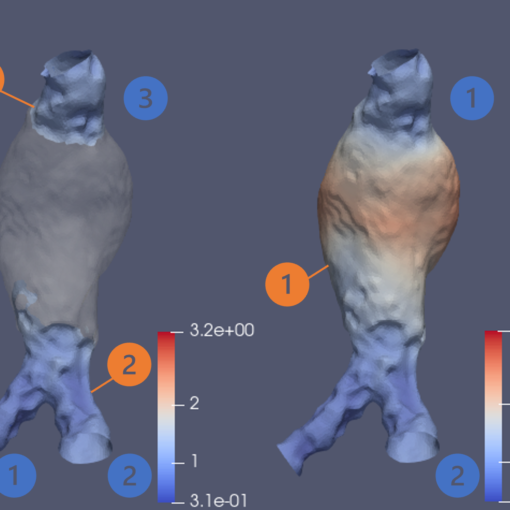

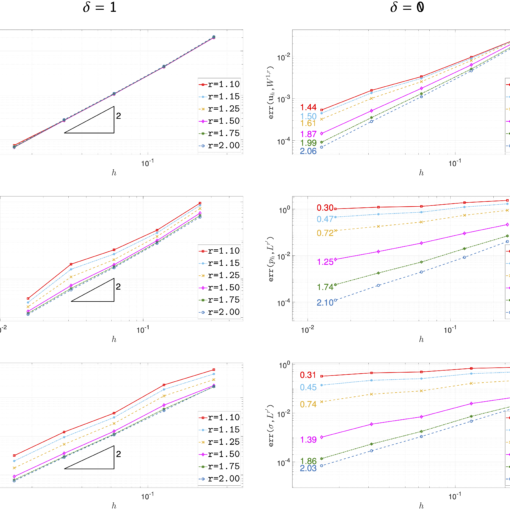

Abstract: This paper introduces a Bayesian framework that combines Markov chain Monte Carlo (MCMC) sampling, dimensionality reduction, and neural density estimation to efficiently handle inverse problems that must be solved multiple times and are characterized by intractable or unavailable likelihood functions. The posterior probability distribution over quantities of interest is estimated via differential evolution Metropolis sampling, empowered by learnable mappings. First, a variational autoencoder performs probabilistic feature extraction from observational data. The resulting latent structure inherently quantifies uncertainty, capturing deviations between the actual data-generating process and the training data distribution. At each step of the MCMC random walk, the algorithm jointly samples from the data-informed latent distribution and the space of parameters to be inferred. These samples are fed into a neural likelihood estimator based on normalizing flows, specifically real-valued non-volume preserving transformations. The scaling and translation functions of the affine coupling layers are modeled by neural networks conditioned on the unknown parameters, allowing the representation of arbitrary observation likelihoods. The proposed methodology is validated on two case studies: structural health monitoring of a railway bridge for damage detection, localization, and quantification, and estimation of the conductivity field in a steady-state Darcy’s groundwater flow problem. The results demonstrate the efficiency of the inference strategy, while ensuring that model-reality mismatches do not yield overconfident, yet inaccurate, estimates.
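
For readers unfamiliar with the affine coupling layers mentioned in the abstract, the following is a minimal sketch of a single parameter-conditioned coupling layer in plain Python. It is not the authors' implementation. The class name `ConditionalAffineCoupling`, the helper `make_conditioner`, the untrained random linear maps standing in for neural networks, the `tanh` bound on the log-scale, and the standard normal base density are all assumptions made for illustration.

```python
import math
import random


def make_conditioner(in_dim, out_dim, rng):
    """Hypothetical stand-in for a neural network: one random linear layer."""
    W = [[rng.gauss(0.0, 0.5) for _ in range(in_dim)] for _ in range(out_dim)]
    b = [rng.gauss(0.0, 0.1) for _ in range(out_dim)]

    def net(x):
        return [sum(w * xi for w, xi in zip(row, x)) + bi for row, bi in zip(W, b)]

    return net


class ConditionalAffineCoupling:
    """One affine coupling layer whose scale/shift depend on parameters theta."""

    def __init__(self, dim, cond_dim, rng):
        self.d = dim // 2  # the first d entries pass through unchanged
        n_out = dim - self.d
        self.scale_net = make_conditioner(self.d + cond_dim, n_out, rng)
        self.shift_net = make_conditioner(self.d + cond_dim, n_out, rng)

    def _scale_shift(self, x_a, theta):
        h = list(x_a) + list(theta)
        s = [math.tanh(v) for v in self.scale_net(h)]  # bounded log-scale (assumption)
        t = self.shift_net(h)
        return s, t

    def forward(self, z, theta):
        """Map base sample z to y; return y and log|det dy/dz|."""
        z_a, z_b = z[:self.d], z[self.d:]
        s, t = self._scale_shift(z_a, theta)
        y_b = [zb * math.exp(si) + ti for zb, si, ti in zip(z_b, s, t)]
        return list(z_a) + y_b, sum(s)

    def inverse(self, y, theta):
        """Map y back to z; return z and log|det dz/dy|."""
        y_a, y_b = y[:self.d], y[self.d:]
        s, t = self._scale_shift(y_a, theta)
        z_b = [(yb - ti) * math.exp(-si) for yb, si, ti in zip(y_b, s, t)]
        return list(y_a) + z_b, -sum(s)


def log_likelihood(layer, y, theta):
    """Change of variables: log p(y | theta) = log N(z; 0, I) + log|det dz/dy|."""
    z, log_det_inv = layer.inverse(y, theta)
    log_base = sum(-0.5 * zi * zi - 0.5 * math.log(2.0 * math.pi) for zi in z)
    return log_base + log_det_inv


rng = random.Random(0)
layer = ConditionalAffineCoupling(dim=4, cond_dim=2, rng=rng)
theta = [0.3, -1.2]           # illustrative parameter values
z = [0.1, -0.4, 0.7, 1.5]     # illustrative base sample
y, log_det = layer.forward(z, theta)
z_back, log_det_inv = layer.inverse(y, theta)
ll = log_likelihood(layer, y, theta)
```

Because the scale and shift are computed only from the untouched half of the input and from `theta`, the layer is invertible in closed form and its log-determinant is just the sum of the log-scales. In a sampler such as the one described in the abstract, a function like `log_likelihood` would be evaluated at each proposed parameter value; here the weights are random, so the numbers carry no meaning beyond demonstrating the mechanics.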